Dynamic occlusion on Quest 3 is currently only supported in a handful of apps, but now it’s higher quality, uses less CPU and GPU, and is slightly easier for developers to implement.

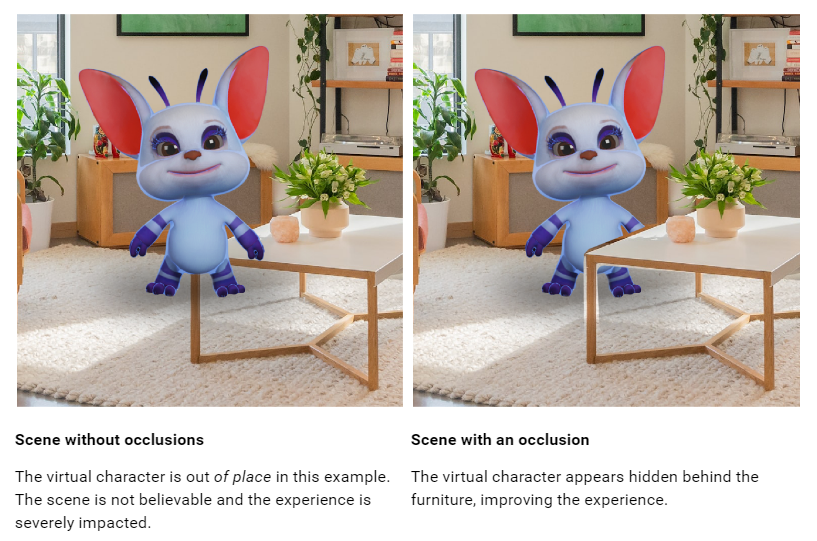

Occlusion refers to the ability of virtual objects to appear behind real objects, a crucial capability for mixed reality headsets. Occlusion that works only against pre-scanned scenery is known as static occlusion, while occlusion that also handles changing scenery and moving objects is known as dynamic occlusion.
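At its core, both kinds of occlusion come down to the same per-pixel comparison. The difference is where the real-world depth comes from: a pre-scanned scene mesh for static occlusion, or a depth map refreshed every frame for dynamic occlusion. The sketch below is a minimal NumPy illustration, assuming real and virtual depths are already in metres on the same pixel grid. The function and variable names are hypothetical and are not part of Meta's Depth API.

```python
import numpy as np

def occlusion_mask(real_depth: np.ndarray, virtual_depth: np.ndarray) -> np.ndarray:
    """Return True where the virtual pixel should be hidden.

    A virtual pixel is occluded when the real surface at that pixel is
    closer to the viewer (smaller depth, in metres) than the virtual object.
    """
    return real_depth < virtual_depth

# A 1x4 strip of pixels: a real hand at 0.4 m covers the middle two pixels,
# and the back wall is at 3.0 m elsewhere.
real = np.array([[3.0, 0.4, 0.4, 3.0]])
# A virtual cube sits 1.0 m away across the whole strip.
virtual = np.full((1, 4), 1.0)

mask = occlusion_mask(real, virtual)
print(mask)
```

Only the two pixels covered by the hand hide the cube. With static occlusion, `real` would never change after the scan, so a hand moving in front of the cube would not hide it.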

Quest 3 launched with support for static occlusion but not dynamic occlusion. A few days later, dynamic occlusion was released as an "experimental" feature for developers, meaning apps using it couldn't be shipped on the Quest Store or App Lab. In December, that restriction was dropped.

Developers implement dynamic occlusion on a per-app basis using Meta's Depth API, which provides a coarse per-frame depth map generated by the headset. Integration is not easy, and as a result very few Quest 3 mixed reality apps currently support dynamic occlusion.

Another problem with dynamic occlusion on Quest 3 is that the depth map is very low resolution, so you’ll see an empty gap around the edges of objects and it won’t pick up details like the spaces between your fingers.
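To see why a coarse depth map loses detail like the gaps between fingers, the sketch below shrinks a 16-pixel row of depth to 4 samples and stretches it back. It assumes each coarse sample keeps the nearest (minimum) depth in its block and is upsampled by simple repetition. Both choices are illustrative assumptions, not how Meta actually produces or samples its depth map, and the helper names are hypothetical.

```python
import numpy as np

def downsample_min(depth: np.ndarray, factor: int) -> np.ndarray:
    """Coarsen a 1-D depth row, keeping the nearest (minimum) depth per block."""
    return depth.reshape(-1, factor).min(axis=1)

def upsample_nearest(depth: np.ndarray, factor: int) -> np.ndarray:
    """Stretch a coarse row back to full resolution by repeating each sample."""
    return np.repeat(depth, factor)

# 16-pixel row: wall at 3.0 m, two fingers at 0.4 m.
fine = np.full(16, 3.0)
fine[4:7] = 0.4   # finger 1 covers pixels 4-6
fine[9:12] = 0.4  # finger 2 covers pixels 9-11; pixels 7-8 are the gap

virtual = 1.0  # a virtual object 1.0 m away, behind the fingers

# Which pixels hide the virtual object, at full and at coarse resolution.
fine_hidden = fine < virtual
coarse_hidden = upsample_nearest(downsample_min(fine, 4), 4) < virtual

print(fine_hidden[7:9], coarse_hidden[7:9])
```

At full resolution the virtual object stays visible through the two-pixel gap. After the round trip through the coarse map, each finger's block swallows its neighbouring gap pixel, so the gap is filled in and the object is wrongly hidden there.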

With v67 of the Meta XR Core SDK, though, Meta has slightly improved the visual quality of the Depth API and significantly optimized its performance. The company says it now uses 80% less GPU and 50% less CPU, freeing up extra resources for developers.

To make the feature easier to integrate, v67 also adds support for occlusion in shaders built with Unity's Shader Graph tool, and refactors the Depth API's code to make it easier to work with.

I tried out the Depth API with v67 and can confirm it provides slightly higher quality occlusion, though it’s still very rough. But v67 has another trick up its sleeve that is more significant than the raw quality improvement.

UploadVR trying out the Depth API with hand mesh occlusion in the v67 SDK.

The Depth API now has an option to exclude your tracked hands from the depth map so that they can be masked out using the hand tracking mesh instead, which follows the shape of your fingers far more closely than the coarse depth map. Apple Vision Pro, by contrast, has dynamic occlusion only for your hands and arms, because rather than generating a depth map, it masks them out the same way Zoom masks out your face.
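One way to picture the hand-exclusion option is as two depth sources merged before the occlusion test: the environment depth map with the hands removed, plus the depth of the rendered hand tracking mesh. Taking the nearer of the two at each pixel is what a depth buffer does when the hand mesh is drawn into it. The sketch below assumes both sources are already per-pixel depths in metres, with infinity where no hand is present. The names are hypothetical and this is not the SDK's actual code path.

```python
import numpy as np

def combined_real_depth(env_depth: np.ndarray, hand_depth: np.ndarray) -> np.ndarray:
    """Merge environment depth (hands removed) with hand-mesh depth.

    env_depth:  per-pixel depth of the room with hands excluded (metres)
    hand_depth: per-pixel depth of the hand tracking mesh (np.inf where no hand)
    The nearer surface wins, as it would in a depth buffer.
    """
    return np.minimum(env_depth, hand_depth)

# 1x6 row: the environment depth map (hands removed) sees only the wall at 3.0 m.
env = np.full(6, 3.0)
# Hand mesh: fingers at 0.4 m on pixels 1, 2 and 4, with a gap at pixel 3.
hand = np.array([np.inf, 0.4, 0.4, np.inf, 0.4, np.inf])

virtual = 1.0  # virtual object 1.0 m away
hidden = combined_real_depth(env, hand) < virtual
print(hidden)
```

Because the finger shapes come from the mesh rather than the coarse map, the gap at pixel 3 survives and the virtual object stays visible there.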